27 Apr 2026

When gene-editing technology escapes the test tubes of a laboratory and enters the uncontrolled wilderness, and humans are no longer the sole “creators”, what will the world become?

Recently, a research project from the Department of Industrial Design at Xi’an Jiaotong-Liverpool University used artificial intelligence to construct a prophecy of the future. In the paper titled “From Concept to Video: An End-to-End AI-Assisted Approach for Storytelling”, the research team applied an end-to-end generative AI workflow to the topic of genetic modification. They produced a short film exploring ethical dilemmas, systematically examining the potential of worlding with artificial intelligence.

The paper was accepted to the 12th International Conference on Digital and Interactive Arts (ARTECH 2025), held in Portugal in November 2025, and has been published by the Association for Computing Machinery (ACM).

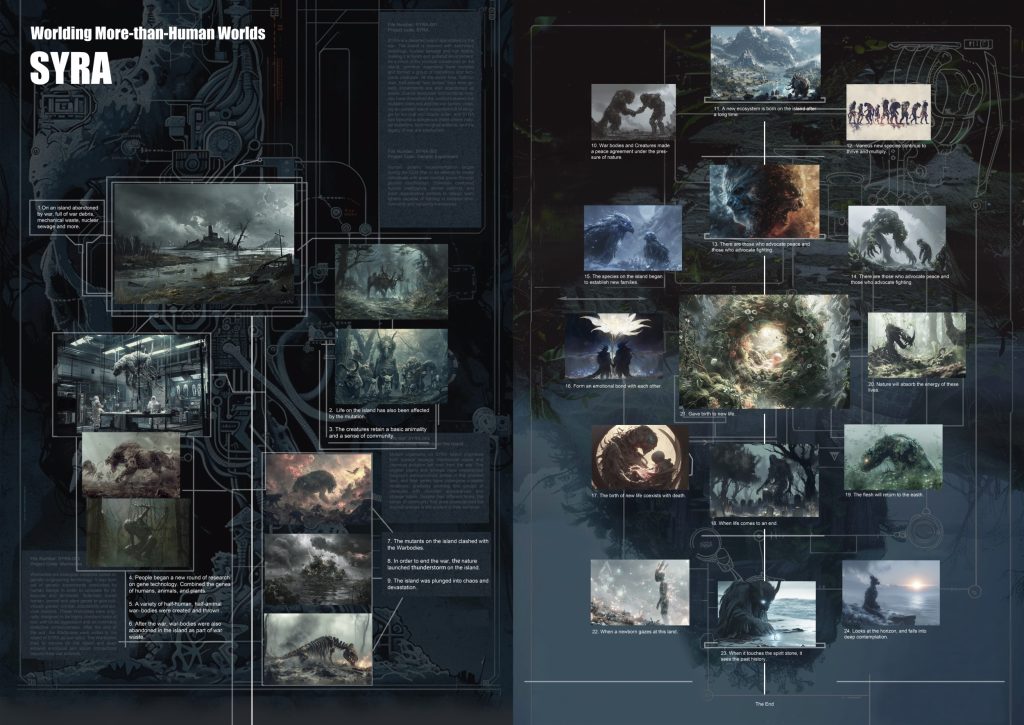

Image-based storyboard of the project

Aven Le Zhou, an Assistant Professor in the Department of Industrial Design and the paper's corresponding author, explained that the project originated from an assignment titled "Worlding More-than-Human Worlds" in the course IND 406 "Body, Space, and Machine".

Building on the successful coursework, he invited students Yi’an Tang and Wenxiao Zhu to continue deepening the research as first authors during the summer break. They reflected on their practical experience and distilled it into a methodology, which ultimately led to the published paper.

The research does more than present the ecological spectacle of an island after genetic warfare; it analyses in depth how non-human life forms evolve on their own and restore their ecosystems when biotechnology fails.

Rooted in post-humanism and ecological resilience theories, the project focuses on how non-human life adapts, evolves, and interacts beyond the human. By integrating visual storytelling, generative imagery, and short video production, the work fills a gap in mainstream media regarding the entangled narratives of biotechnology, conflict, and ecological evolution. The resulting AI short film is not merely a technical demonstration but a profound reflection on the ethical boundaries of genetic engineering and the role of design in shaping future narratives.

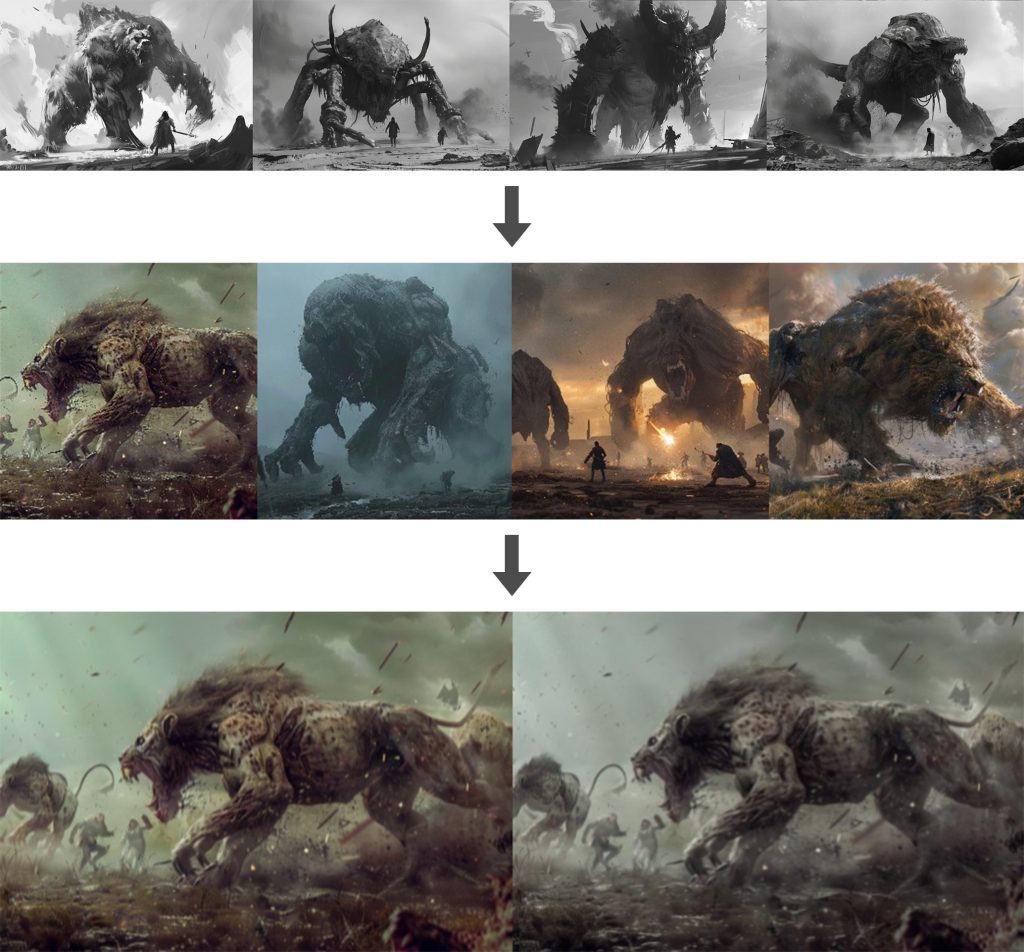

Progressive refinement of image storyboards across production stages

Technologically, the study demonstrates a coherent creative workflow: generative tools carry the entire process from textual concept to image generation and finally to video production. This full-process AIGC pipeline presents complex ethical themes efficiently, transforming abstract theories into high-impact visual experiences.

Wenxiao Zhu says that neither she nor Yi’an Tang is from an animation or film-related programme, so they relied entirely on AI tools to develop their creative ideas and give them concrete form.

“During the creative process, it was easy to get distracted by chasing ‘cool’ AI special effects and lose sight of the research focus,” Wenxiao says.

“Luckily, Aven helped to keep us on track, focusing on the core of ‘how non-human life evolves’. He guided us in how to reflect on our practice and organise our trial-and-error experiences into a rigorous research method, allowing us to gain substantial practice-based research experience. Attending the international conference also allowed us to learn about cutting-edge academic content and broaden our horizons.

“Furthermore, through the process of developing the project, we truly realised that while AI can quickly generate visuals and weave stories, it cannot make ethical choices for us, nor can it replace independent thinking and self-reflection.”

Aven Le Zhou, Wenxiao Zhu, and Yi’an Tang at ARTECH 2025

Looking ahead, the team proposed three major research directions: cross-cultural ethical storytelling, to explore how different societies and cultures interpret biotechnology through localised perspectives; integrating AIGC tools into game engines or XR systems for interactive experiences, allowing users to guide and shape the evolution of virtual worlds in real time; and training domain-specific AIGC models by fine-tuning Large Language Models (LLMs) on specific themes such as genetic ethics and ecological aesthetics, to enhance accuracy and reduce ambiguity in generated content.

By Yi Qian

Translated by Yi Qian

Images provided by the Department of Industrial Design
