08 May 2026

At a key stage in the evolution of artificial intelligence (AI) from basic “recognition” to deeper “understanding”, a research team from Xi’an Jiaotong-Liverpool University (XJTLU) has made significant advances in computer vision.

The IEEE/CVF Conference on Computer Vision and Pattern Recognition 2026 (CVPR 2026) recently announced its paper acceptance results. The MMT Lab, led by Professor Jimin Xiao at XJTLU’s Department of Intelligent Science, had 11 papers accepted, including seven Main Track papers and four Findings papers.

As one of the world’s most influential conferences in computer vision, CVPR is known for its rigorous acceptance standards. The research it publishes often reflects the field’s most cutting-edge developments and the innovation capacity of leading institutions.

“Compared with previous research, which focused more on single scenarios and individual task accuracy, this year’s work places greater emphasis on AI’s real-world adaptability and practical applications,” says Professor Xiao.

He notes that the acceptances mark the team’s seventh consecutive year of success at CVPR. This year, the team not only increased the number of accepted papers, but also presented more systematic research spanning multi-scenario applications, multimodal learning, and continual learning.

Finding errors by learning the normal

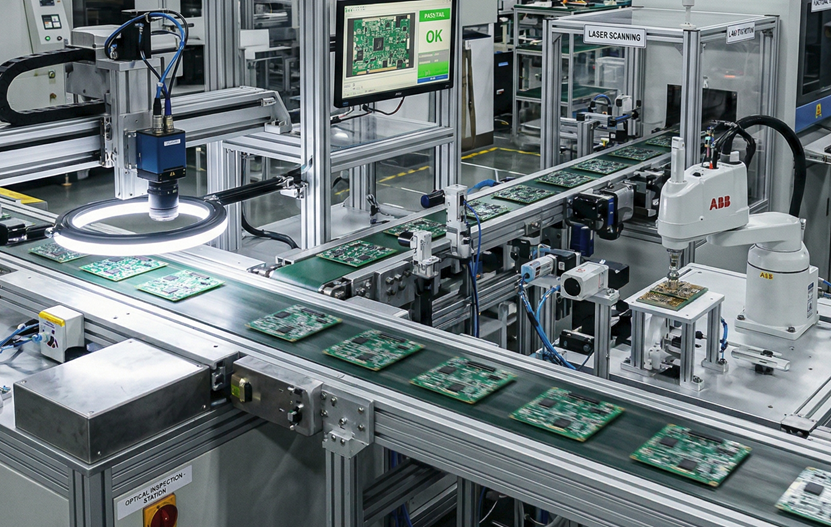

One of the team’s standout breakthroughs focuses on industrial quality inspection. Traditional AI systems often fail to detect novel defects because they have not encountered them before. Instead of attempting to catalogue every possible flaw, the MMT Lab developed a residual learning method that teaches AI the “stable features” of a perfect product. By comparing a current item with a normal sample, the system can identify even subtle and previously unseen anomalies.

The team also introduced a multimodal detection method that works without additional training. This allows AI to analyse images across different data types and identify targets in complex environments, improving both flexibility and efficiency in industrial inspection. Because the model does not require separate training for each scenario, it can be rapidly adapted to a wide range of applications.

Lowering barriers in data-scarce fields

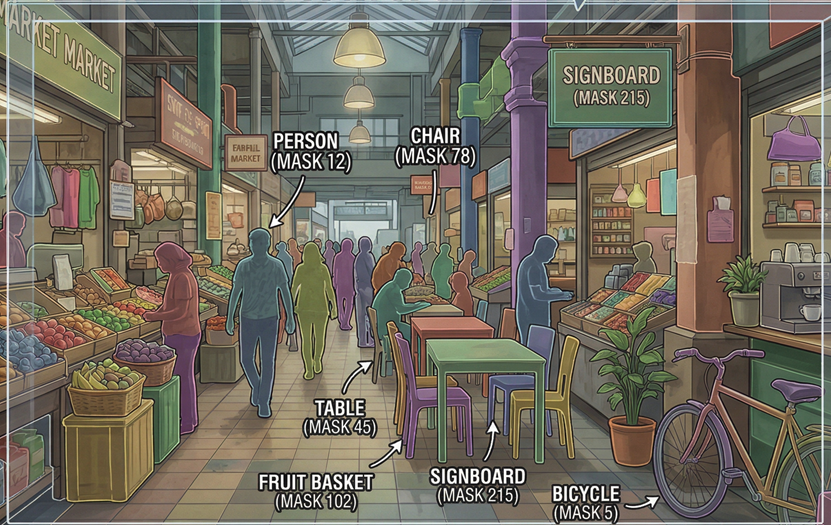

High-quality image segmentation traditionally depends on large amounts of detailed manual annotation, which is both time-consuming and expensive. This is particularly challenging in data-scarce fields such as medical imaging and satellite remote sensing.

To address this, the team developed a “frequency-aware framework” that mimics human perception. The system first focuses on low-frequency information, such as shapes and colours, before refining high-frequency details like textures and edges. This coarse-to-fine approach produces segmented images that are both complete and precise.

The researchers also addressed limitations in language-guided segmentation. Using a “dynamic assembly mechanism”, the AI can flexibly infer which combinations of shapes and textures best match a text description, even when encountering unfamiliar objects.

The research could significantly lower the barriers to applying AI in specialised fields, enabling industries with limited data resources to benefit from high-precision visual recognition technologies.

Solving the ‘forgetting’ problem

Can AI learn new knowledge without forgetting what it has already learned? This is one of the central challenges in continual learning.

To address this, the MMT Lab shifted its focus from simply preventing forgetting to helping AI “remember the old to learn the new”. By simulating human processes of review and summarisation, the system can distil essential knowledge from previous experiences when adapting to new scenarios.

The team also proposed an innovative pattern calibration approach that reduces performance fluctuations caused by conflicts between old and new knowledge, helping maintain AI stability during long-term learning.

A collaborative effort

The 11 papers accepted to CVPR 2026 were produced through collaborations between Professor Xiao’s team at XJTLU and researchers from the University of Liverpool, Shanghai Artificial Intelligence Research Institute, Beijing Jiaotong University, and other institutions in China and abroad.

The research spans multiple frontier areas, including anomaly detection, image segmentation, weakly supervised learning, large multimodal models and continual learning, demonstrating the team’s sustained and systematic work across key areas of computer vision.

By Huatian Jin

Translated by Xiangyin Han

Edited by Xinmin Han

08 May 2026